
MNIST Handwritten Digit Recognition with PyTorch

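MNIST is a classic dataset of handwritten digits. It contains 60,000 training images and 10,000 test images, each a 28×28 grayscale image. An MNIST classifier in PyTorch is usually built in four parts: data loading, model definition, training, and evaluation.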

Data Loading and Preprocessing

Load MNIST with torchvision and normalize it. Data augmentation is optional and is not used here. Example data-loading code:

```python
import torch
from torchvision import datasets, transforms

# Convert images to tensors in [0, 1], then normalize with the MNIST mean and std
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST('data', train=True, download=True, transform=transform)
test_dataset = datasets.MNIST('data', train=False, transform=transform)

# Shuffle the training data every epoch; keep the test data in a fixed order
train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=64, shuffle=True)
test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=1000, shuffle=False)
```
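`ToTensor()` scales 8-bit pixel values into [0, 1]. `Normalize((0.1307,), (0.3081,))` then computes `(x - mean) / std` per channel, using the commonly quoted mean and standard deviation of the MNIST training pixels. The pure-Python sketch below shows the range of values this produces. The helper `normalize_pixel` is hypothetical and is not a torchvision API.

```python
MNIST_MEAN = 0.1307
MNIST_STD = 0.3081


def normalize_pixel(raw: int) -> float:
    """Mimic ToTensor() followed by Normalize() for one 8-bit grayscale pixel."""
    x = raw / 255.0                      # ToTensor(): uint8 [0, 255] -> float [0, 1]
    return (x - MNIST_MEAN) / MNIST_STD  # Normalize(): shift by mean, scale by std


for raw in (0, 128, 255):
    print(raw, round(normalize_pixel(raw), 4))
```

A black background pixel becomes about -0.42 and a fully white stroke pixel about 2.82, so the network sees roughly zero-centered inputs.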

Building the Model

Define a simple convolutional neural network (CNN):

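```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 32, 3, 1)   # 1x28x28 -> 32x26x26
        self.conv2 = nn.Conv2d(32, 64, 3, 1)  # 32x13x13 -> 64x11x11
        # After the second pooling the feature map is 64x5x5 = 1600 values
        self.fc1 = nn.Linear(64 * 5 * 5, 128)
        self.fc2 = nn.Linear(128, 10)

    def forward(self, x):
        x = F.relu(self.conv1(x))
        x = F.max_pool2d(x, 2)   # 32x26x26 -> 32x13x13
        x = F.relu(self.conv2(x))
        x = F.max_pool2d(x, 2)   # 64x11x11 -> 64x5x5
        x = torch.flatten(x, 1)  # keep the batch dimension, flatten the rest
        x = F.relu(self.fc1(x))
        x = self.fc2(x)
        # Log-probabilities over the 10 digit classes
        return F.log_softmax(x, dim=1)
```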
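The input size of `fc1` is determined by the feature-map shape after the convolution and pooling layers. For an input of side H, a convolution with kernel k, stride s and padding p outputs a side of floor((H + 2p - k) / s) + 1. `max_pool2d(x, 2)` roughly halves the side. The pure-Python sketch below runs this arithmetic for both models in this article. The helper names are hypothetical.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output side length of an nn.Conv2d layer (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size: int, kernel: int = 2) -> int:
    """Output side length of F.max_pool2d when stride equals the kernel size (the default)."""
    return (size - kernel) // kernel + 1


# Simple CNN: conv -> pool -> conv -> pool, 64 channels at the end
side = pool_out(conv_out(pool_out(conv_out(28, 3)), 3))
print("simple CNN flatten size:", 64 * side * side)

# main.py model: conv -> conv -> pool, 64 channels at the end
side = pool_out(conv_out(conv_out(28, 3), 3))
print("main.py flatten size:", 64 * side * side)
```

For the simple CNN the result is 64 × 5 × 5 = 1600, which is the number of input features `fc1` must accept. For the `main.py` model the result is 64 × 12 × 12 = 9216.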

Training the Model

Define the optimizer and the loss function, then train:

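```python
model = Net()
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
# The model already outputs log_softmax, so pair it with the negative log-likelihood loss
criterion = nn.NLLLoss()


def train(model, device, train_loader, optimizer, epoch):
    model.train()  # training mode (matters for layers such as dropout)
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()             # clear gradients from the previous step
        output = model(data)
        loss = criterion(output, target)
        loss.backward()                   # backpropagate
        optimizer.step()                  # update the parameters
        if batch_idx % 100 == 0:
            print(f'Epoch: {epoch}, Loss: {loss.item():.4f}')
```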
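The model ends with `F.log_softmax`, so it outputs log-probabilities. `nn.NLLLoss` expects exactly this input. `nn.CrossEntropyLoss` expects raw logits and applies `log_softmax` internally, so log_softmax followed by NLL loss is the same quantity as cross-entropy on the logits.

Because log_softmax is idempotent, passing log-probabilities to `nn.CrossEntropyLoss` happens to produce the same value. `nn.NLLLoss` is still the conventional pairing. The pure-Python sketch below checks both facts on one made-up 3-class example. The function names and numbers are illustrative only.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax: x_i - log(sum_j exp(x_j))."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum_exp for x in logits]


def nll(log_probs, target):
    """Per-sample negative log-likelihood, as NLLLoss computes it on log-probabilities."""
    return -log_probs[target]


def cross_entropy(logits, target):
    """Per-sample cross-entropy on raw logits: -log(softmax(logits)[target])."""
    exps = [math.exp(x) for x in logits]
    return -math.log(exps[target] / sum(exps))


logits = [2.0, 0.5, -1.0]  # made-up scores for 3 classes
target = 0
log_probs = log_softmax(logits)
print(nll(log_probs, target), cross_entropy(logits, target))
# Applying log_softmax a second time leaves the values unchanged (up to rounding)
print(log_softmax(log_probs), log_probs)
```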

Testing the Model

Evaluate the model on the test set:

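```python
def test(model, device, test_loader):
    model.eval()  # evaluation mode
    test_loss = 0
    correct = 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            # Sum (not average) the batch loss so dividing by the dataset size gives a per-sample mean
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            pred = output.argmax(dim=1, keepdim=True)  # index of the highest log-probability
            correct += pred.eq(target.view_as(pred)).sum().item()
    test_loss /= len(test_loader.dataset)
    print(f'Test: Average loss: {test_loss:.4f}, '
          f'Accuracy: {correct}/{len(test_loader.dataset)} '
          f'({100. * correct / len(test_loader.dataset):.2f}%)')
```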
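If the accumulated test loss is later divided by `len(test_loader.dataset)`, each batch must contribute a sum, for example `F.nll_loss(..., reduction='sum')`. Adding up per-batch means and then dividing by the number of samples shrinks the result by roughly the batch size.

The pure-Python sketch below shows the difference, along with the accuracy calculation. All losses, predictions and labels in it are made up.

```python
# Made-up per-sample losses, predictions and labels, in two batches of 3 and 2
batches = [
    {"losses": [0.1, 0.3, 0.2], "preds": [7, 2, 1], "targets": [7, 2, 0]},
    {"losses": [0.4, 0.0], "preds": [4, 9], "targets": [4, 9]},
]
num_samples = sum(len(b["targets"]) for b in batches)

sum_loss = 0.0   # like reduction='sum'
mean_loss = 0.0  # like the default reduction='mean'
correct = 0
for b in batches:
    sum_loss += sum(b["losses"])
    mean_loss += sum(b["losses"]) / len(b["losses"])
    correct += sum(p == t for p, t in zip(b["preds"], b["targets"]))

print("average loss (sum-reduced):", sum_loss / num_samples)
print("average loss (mean-reduced, too small):", mean_loss / num_samples)
print("accuracy (%):", 100.0 * correct / num_samples)
```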

Full Training Loop

Combine training and testing into one loop that runs for 10 epochs:

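```python
# Use the GPU if one is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)

# range(1, 11) yields epochs 1..10, i.e. 10 epochs
for epoch in range(1, 11):
    train(model, device, train_loader, optimizer, epoch)
    test(model, device, test_loader)
```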

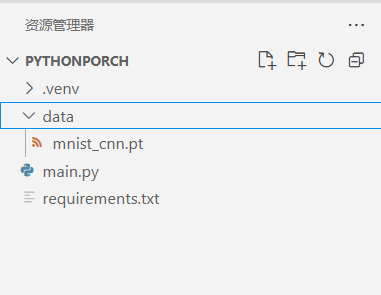

Saving and Loading the Model

After training, save the model's parameters:

```python
torch.save(model.state_dict(), 'mnist_cnn.pt')
```

To load the model:

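```python
model = Net()
# map_location='cpu' lets a checkpoint saved on a GPU load on a CPU-only machine
model.load_state_dict(torch.load('mnist_cnn.pt', map_location='cpu'))
model.eval()  # switch to evaluation mode before inference
```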

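Alternatively, here is another, more complete MNIST script that adds dropout, command-line options and a learning-rate schedule:

MNIST main.py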

```python
import argparse

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms
from torch.optim.lr_scheduler import StepLR


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 32, 3, 1)
        self.conv2 = nn.Conv2d(32, 64, 3, 1)
        self.dropout1 = nn.Dropout(0.25)
        self.dropout2 = nn.Dropout(0.5)
        self.fc1 = nn.Linear(9216, 128)  # 64 x 12 x 12 after conv, conv, pool
        self.fc2 = nn.Linear(128, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = F.relu(x)
        x = self.conv2(x)
        x = F.relu(x)
        x = F.max_pool2d(x, 2)
        x = self.dropout1(x)
        x = torch.flatten(x, 1)
        x = self.fc1(x)
        x = F.relu(x)
        x = self.dropout2(x)
        x = self.fc2(x)
        output = F.log_softmax(x, dim=1)
        return output


# Train the model for one epoch
def train(args, model, device, train_loader, optimizer, epoch):
    model.train()
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        loss = F.nll_loss(output, target)
        loss.backward()
        optimizer.step()
        if batch_idx % args.log_interval == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))
            if args.dry_run:
                break


# Evaluate the model on the test set
def test(model, device, test_loader):
    model.eval()
    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output, target, reduction='sum').item()  # sum up batch loss
            pred = output.argmax(dim=1, keepdim=True)  # get the index of the max log-probability
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)

    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))


# Entry point of the MNIST example
def main():
    # Training settings
    parser = argparse.ArgumentParser(description='PyTorch MNIST Example')
    parser.add_argument('--batch-size', type=int, default=64, metavar='N',
                        help='input batch size for training (default: 64)')
    parser.add_argument('--test-batch-size', type=int, default=1000, metavar='N',
                        help='input batch size for testing (default: 1000)')
    parser.add_argument('--epochs', type=int, default=14, metavar='N',
                        help='number of epochs to train (default: 14)')
    parser.add_argument('--lr', type=float, default=1.0, metavar='LR',
                        help='learning rate (default: 1.0)')
    parser.add_argument('--gamma', type=float, default=0.7, metavar='M',
                        help='Learning rate step gamma (default: 0.7)')
    parser.add_argument('--no-accel', action='store_true',
                        help='disables accelerator')
    parser.add_argument('--dry-run', action='store_true',
                        help='quickly check a single pass')
    parser.add_argument('--seed', type=int, default=1, metavar='S',
                        help='random seed (default: 1)')
    parser.add_argument('--log-interval', type=int, default=10, metavar='N',
                        help='how many batches to wait before logging training status')
    parser.add_argument('--save-model', action='store_true',
                        help='For Saving the current Model')
    args = parser.parse_args()

    # torch.accelerator is only available in recent PyTorch releases
    use_accel = not args.no_accel and torch.accelerator.is_available()

    torch.manual_seed(args.seed)

    if use_accel:
        device = torch.accelerator.current_accelerator()
    else:
        device = torch.device("cpu")

    # Shuffle the training set on every device; keep the test set in order
    train_kwargs = {'batch_size': args.batch_size, 'shuffle': True}
    test_kwargs = {'batch_size': args.test_batch_size}
    if use_accel:
        accel_kwargs = {'num_workers': 1, 'pin_memory': True}
        train_kwargs.update(accel_kwargs)
        test_kwargs.update(accel_kwargs)

    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])
    dataset1 = datasets.MNIST('../data', train=True, download=True, transform=transform)
    dataset2 = datasets.MNIST('../data', train=False, transform=transform)
    train_loader = torch.utils.data.DataLoader(dataset1, **train_kwargs)
    test_loader = torch.utils.data.DataLoader(dataset2, **test_kwargs)

    model = Net().to(device)
    optimizer = optim.Adadelta(model.parameters(), lr=args.lr)

    # Multiply the learning rate by gamma after every epoch
    scheduler = StepLR(optimizer, step_size=1, gamma=args.gamma)
    for epoch in range(1, args.epochs + 1):
        train(args, model, device, train_loader, optimizer, epoch)
        test(model, device, test_loader)
        scheduler.step()

    if args.save_model:
        torch.save(model.state_dict(), "mnist_cnn.pt")


if __name__ == '__main__':
    main()
```

Command-line usage of the MNIST script

```
python main.py --help
```

```
usage: main.py [-h] [--batch-size N] [--test-batch-size N] [--epochs N] [--lr LR] [--gamma M] [--no-accel]
               [--dry-run] [--seed S] [--log-interval N] [--save-model]

PyTorch MNIST Example

optional arguments:
  -h, --help           show this help message and exit
  --batch-size N       input batch size for training (default: 64)
  --test-batch-size N  input batch size for testing (default: 1000)
  --epochs N           number of epochs to train (default: 14)
  --lr LR              learning rate (default: 1.0)
  --gamma M            Learning rate step gamma (default: 0.7)
  --no-accel           disables accelerator
  --dry-run            quickly check a single pass
  --seed S             random seed (default: 1)
  --log-interval N     how many batches to wait before logging training status
  --save-model         For Saving the current Model
```

Data directory: the script downloads MNIST into `../data`, relative to the directory it is run from.
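`main.py` pairs `optim.Adadelta` with `StepLR(optimizer, step_size=1, gamma=args.gamma)` and calls `scheduler.step()` once per epoch. As a result, the learning rate used in epoch n is lr × gamma^(n-1). The pure-Python sketch below prints that schedule for the defaults `--lr 1.0 --gamma 0.7 --epochs 14` without touching PyTorch. The helper `step_lr` is hypothetical.

```python
def step_lr(base_lr: float, gamma: float, epoch: int, step_size: int = 1) -> float:
    """Learning rate in a 1-based epoch under StepLR, with scheduler.step() once per epoch."""
    return base_lr * gamma ** ((epoch - 1) // step_size)


for epoch in range(1, 15):
    print(f"epoch {epoch:2d}: lr = {step_lr(1.0, 0.7, epoch):.4f}")
```

With these defaults the rate falls from 1.0 in the first epoch to below 0.01 by epoch 14.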

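Notes

- Make sure PyTorch and torchvision are installed.

- Adjust `batch_size` to your hardware. A smaller batch uses less memory.

- The model architecture and hyperparameters (such as the learning rate) can be tuned as needed.

- Training on a GPU is significantly faster.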
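Changing `batch_size` also changes how many optimizer steps make up one epoch. With 60,000 training images, the count is ceil(60000 / batch_size), or floor(60000 / batch_size) when the DataLoader is created with `drop_last=True`. It also changes how many samples pass between log lines such as `batch_idx % 100`. The pure-Python sketch below uses a hypothetical helper name.

```python
import math

TRAIN_SIZE = 60_000  # number of MNIST training images


def steps_per_epoch(batch_size: int, drop_last: bool = False) -> int:
    """Number of batches a DataLoader yields per epoch over the MNIST training set."""
    if drop_last:
        return TRAIN_SIZE // batch_size  # the last incomplete batch is dropped
    return math.ceil(TRAIN_SIZE / batch_size)


for bs in (32, 64, 128, 256):
    print(bs, steps_per_epoch(bs))
```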